Assessment drives learning. At the University of Sydney, it serves three interconnected purposes: supporting students to act on feedback and improve (assessment for learning), developing students as independent, self-regulating learners (assessment as learning), and determining that learning has taken place (assessment of learning). The Sydney Assessment Framework asks that assessment be valid, inclusive, fair, and regularly reviewed, developing contemporary capabilities in trustworthy and relevant ways. In a context where generative AI is reshaping authentic student work and open assessment tasks are increasingly central to program design, rubrics are more important than ever for making assessment intentions transparent, consistent, and genuinely informative for students.

Why rubrics matter

One of the persistent challenges in higher education is the gap between what assessors know implicitly and what students understand explicitly. Rubrics help close that gap by articulating what academic quality looks like across criteria and performance levels. This matters particularly for students newer to university, for whom academic expectations are often tacit rather than stated.

When students have access to clear criteria before they begin a task, they can use them to plan, monitor, and evaluate their own work. A recent meta-analysis confirmed a moderate, positive effect of rubrics on academic performance, with additional benefits for self-regulated learning and self-efficacy. Over time, this builds evaluative judgement, which Tai et al. argue is among the most transferable capabilities a university education can develop.

Transparent criteria also benefit equity. Students less familiar with implicit disciplinary norms, including first-generation students and those from diverse educational backgrounds, stand to gain most from assessment expectations that are made explicit, consistent with the Sydney Assessment Framework’s principle that assessment must be inclusive, valid, and fair.

For teaching teams, shared rubric criteria support calibration and provide a documented rationale for academic judgements that can reduce the burden of formal appeals.

Across all three of the University’s assessment purposes (for, as, and of learning), rubrics make standards explicit and actionable.

Two rubric types worth knowing

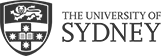

1) Analytic rubrics

Analytic rubrics assess performance across multiple criteria, each with descriptors spanning the Common Grade Scale. This format offers detailed criterion-level feedback and works well for complex tasks where different aspects of performance are meaningfully distinct. Analytic rubrics are particularly powerful when used formatively, for example, as a framework for structured peer feedback or tutor consultations on draft work.

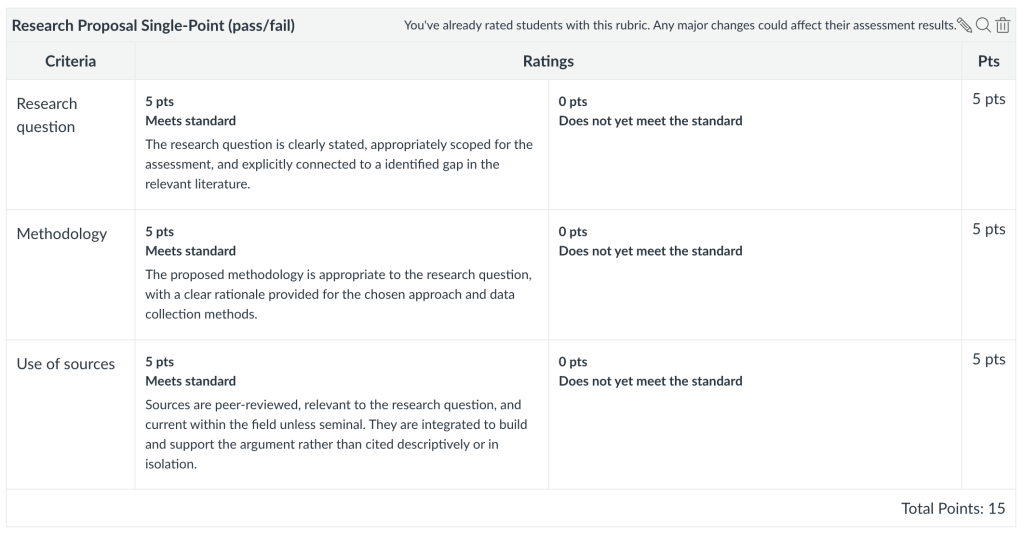

2) Single-point rubrics

Single-point rubrics, associated with Linda Nilson’s specifications grading approach, describe only what meeting the standard looks like for each criterion, leaving space for markers to note where work fell short or exceeded it. Well-suited to pass/fail and hurdle tasks, they also avoid a common pitfall of multi-level rubrics: students calibrating their effort to the minimum described in lower performance bands rather than genuinely aiming for the standard. Used formatively, a single-point rubric is a particularly effective self-assessment tool: students read one clear standard, assess their draft against it, and identify what they still need to address.

Building a strong analytic rubric

- Start with your learning outcomes – each criterion should connect directly to unit learning outcomes. If a criterion cannot be linked to an outcome, it may not belong in the rubric.

- Write criteria that are specific and observable – strong criteria are distinct (minimal overlap), assessable (describing something observable in the work, not intentions or effort), and meaningful (reflecting something that genuinely matters to the learning outcome, not a proxy or formatting requirement).

- Write from the proficient standard outward – draft the descriptor that meets the standard first, then work up and down. This avoids inadvertently designing rubrics around failure.

  - For example, for a use-of-evidence criterion, you might draft the Credit-level descriptor first (“Claims are supported by relevant evidence from appropriate sources, with brief explanation of relevance”), then extend upward to Distinction (“…critically integrated, with explanation of relevance and limitations”) and downward to Pass (“…some evidence, though sources may be limited or relevance not always clear”).

- Use parallel language across levels – if a criterion addresses argument structure at the proficient level, it should address argument structure at every level. Criteria that shift focus across levels cannot be applied consistently by markers or interpreted meaningfully by students.

  - Parallel (works): “Argument is clear and consistently supported” (Credit) → “Argument is clear and well-supported with nuance” (Distinction) → “Argument is somewhat clear but unevenly supported” (Pass)

  - Shifting (doesn’t work): “Argument is clear and consistently supported” (Credit) → “Writing demonstrates sophisticated insight” (Distinction) → “Some grammatical errors present” (Pass)

- Build in formative touchpoints – design opportunities for students to engage with the rubric before submission, through self-assessment, peer review, or draft feedback activities. A rubric that students only see after submission has missed most of its potential.

- Test it – apply the rubric to a sample of student work before releasing it. If markers are making different judgements about the same work, the descriptors need sharpening.
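For teams that record their pilot marking in a spreadsheet, the "test it" step above can be made concrete with a few lines of Python. This is a minimal sketch, not a prescribed method: the marker names, the sample grades, and the 0.7 agreement threshold are all hypothetical, and simple pairwise agreement is only one of several ways to compare markers.

```python
from itertools import combinations

# Hypothetical pilot data: the grade band each of three markers awarded to the
# same four sample scripts, per criterion. Names and values are illustrative.
pilot_marks = {
    "Use of evidence": {
        "Marker A": ["Credit", "Distinction", "Pass", "Credit"],
        "Marker B": ["Credit", "Distinction", "Pass", "Credit"],
        "Marker C": ["Credit", "Distinction", "Credit", "Credit"],
    },
    "Argument structure": {
        "Marker A": ["Distinction", "Credit", "Pass", "Distinction"],
        "Marker B": ["Credit", "Pass", "Pass", "Distinction"],
        "Marker C": ["Distinction", "Credit", "Credit", "Distinction"],
    },
}


def pairwise_agreement(marks_by_marker):
    """Share of (marker pair, script) comparisons that gave the same grade band."""
    matches = 0
    comparisons = 0
    for first, second in combinations(marks_by_marker.values(), 2):
        for grade_a, grade_b in zip(first, second):
            matches += grade_a == grade_b
            comparisons += 1
    return matches / comparisons


def criteria_to_review(pilot, threshold=0.7):
    """Criteria whose agreement falls below the (assumed) threshold."""
    return [
        criterion
        for criterion, marks in pilot.items()
        if pairwise_agreement(marks) < threshold
    ]


for criterion, marks in pilot_marks.items():
    print(f"{criterion}: {pairwise_agreement(marks):.0%} agreement")
print("Descriptors to sharpen:", criteria_to_review(pilot_marks))
```

With this sample data, markers agree far more often on "Use of evidence" than on "Argument structure", so the second criterion's descriptors would be the first to revisit in a calibration conversation. The number is a prompt for that discussion, not a substitute for it.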

Co-creating rubrics with students

One of the most powerful and underused approaches is involving students in the design process. Drawing on Stevens and Levi, co-creation involves sharing examples of student work, asking students to identify what distinguishes stronger from weaker responses, and collaboratively drafting criteria language. Students who have participated in defining the standard are better positioned to use the rubric as a genuine self-assessment tool, and the process itself is a rich metacognitive experience, making visible the tacit standards of disciplinary quality that students are often left to infer. For large cohorts, this can be adapted through Canvas discussion boards or tutorial activities.

Where to go from here

The Division of Teaching and Learning offers a range of resources and professional learning opportunities to support you in designing and implementing effective rubrics:

- Modular Professional Learning Framework – Module 5: Assessment and Feedback for Learning, a two-hour online session with pre- and post-tasks, available as part of the 21-module MPLF program.

- Rubric Development and Implementation in Canvas – Workshop: a 90-minute facilitated online session offered at the start of each semester. View upcoming dates.

- Customised faculty, school, and program sessions – DTL can design and deliver tailored professional learning to address specific assessment priorities in your area. Get in touch – Division of Teaching and Learning.

- Using Rubrics to Support Teaching, Learning and Assessment – Teaching Resource Hub: a self-paced, resource-rich Canvas module aligned to the Sydney Assessment Framework.